Hello everyone,

I want to present an experiment: Fragment, a free, collaborative spectral musical instrument.

Here is a playlist with some videos of Fragment; in most of them, Fragment acts as a virtual instrument for Renoise:

https://www.youtube.com/playlist?list=PLYhyS2OKJmqe_PEimydWZN1KbvCzkjgeI

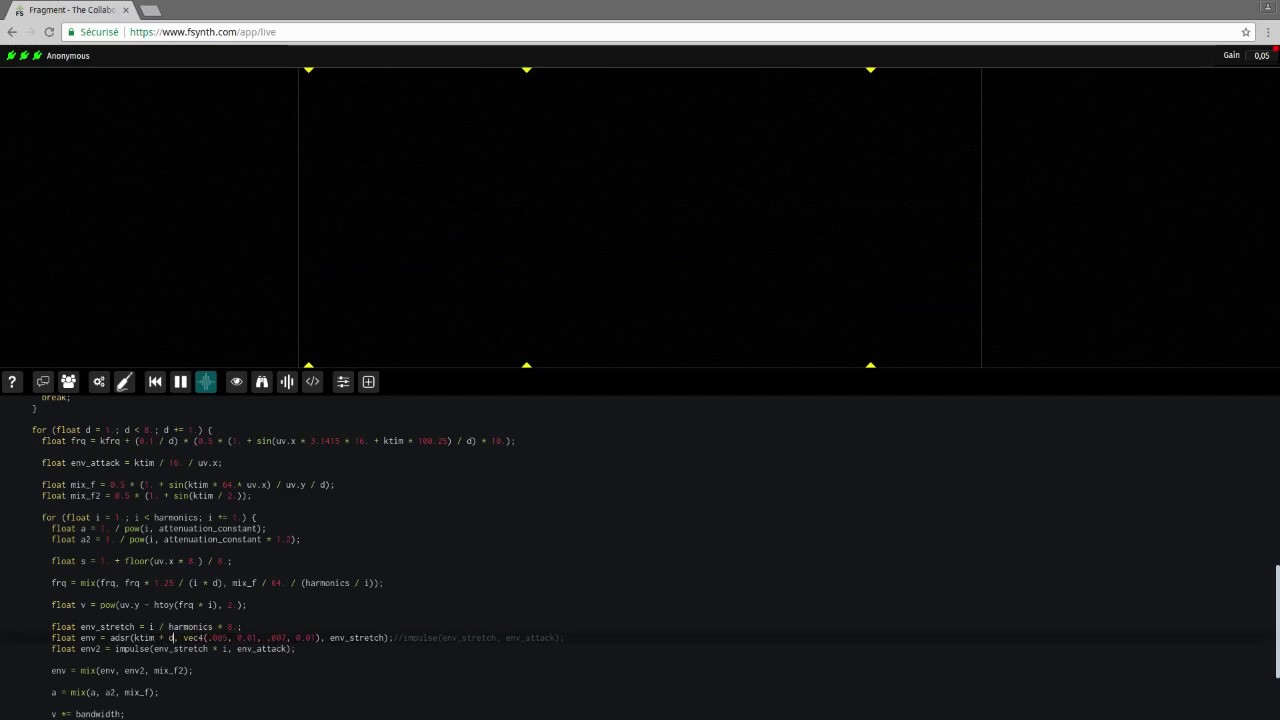

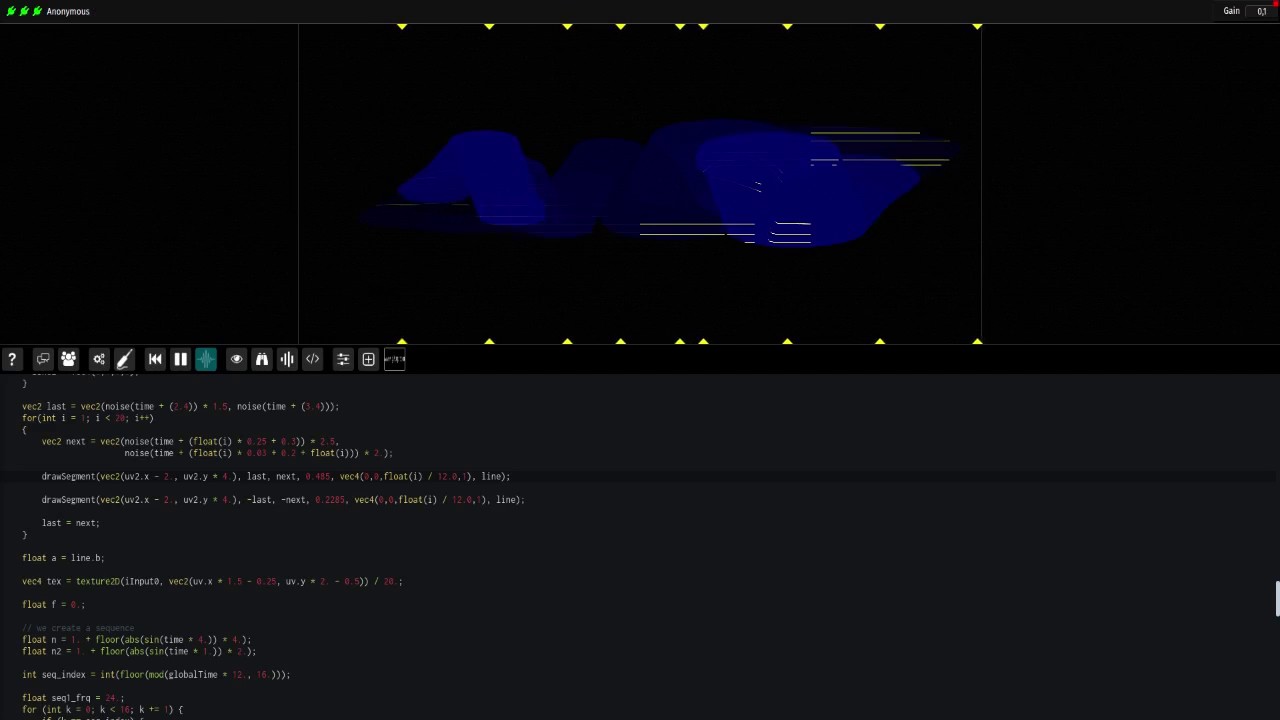

This is first and foremost a “live-coding” additive/spectral synthesizer which associates visuals and audio through direct manipulation of the spectrum. The visuals are generated by a GLSL script (your GPU produces them) which is shared between users, per session. The visuals represent a kind of space of possibilities from which you choose what to hear by “slicing” it, and the spectrum slices are fed to a pure additive synthesis engine in real time. I believe that some weird sounds can be made easily with this synthesizer; complex sounds can also be made, but that may require more advanced knowledge.
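As a rough illustration of the slicing idea, here is a minimal Python sketch of additive synthesis driven by one vertical slice. It is not Fragment's actual engine: the names `slice_to_partials` and `render`, the logarithmic row-to-frequency mapping, and the `f_min` and `octaves` parameters are all assumptions made for the example.

```python
import math

def slice_to_partials(slice_amplitudes, f_min=55.0, octaves=8.0):
    """Map each row of a vertical slice to a (frequency, amplitude) partial.

    Assumption: row 0 is the lowest frequency and rows are spaced
    logarithmically over `octaves` octaves starting at `f_min`.
    """
    n = len(slice_amplitudes)
    partials = []
    for row, amp in enumerate(slice_amplitudes):
        if amp <= 0.0:
            continue  # dark rows contribute no oscillator
        freq = f_min * 2.0 ** (octaves * row / max(n - 1, 1))
        partials.append((freq, amp))
    return partials

def render(partials, duration=0.01, sample_rate=44100):
    """Sum one sine oscillator per partial (plain additive synthesis)."""
    n_samples = int(duration * sample_rate)
    # Normalize by the total amplitude so the sum stays within [-1, 1].
    scale = 1.0 / max(sum(a for _, a in partials), 1.0)
    out = []
    for i in range(n_samples):
        t = i / sample_rate
        s = sum(a * math.sin(2.0 * math.pi * f * t) for f, a in partials)
        out.append(s * scale)
    return out

# A hypothetical 5-row slice: brightness per row, 0.0 = silent.
column = [0.0, 1.0, 0.0, 0.5, 0.0]
partials = slice_to_partials(column)
samples = render(partials)
```

Each bright row of the slice becomes one sine oscillator, and its brightness becomes that oscillator's amplitude. Moving the slice across the visuals over time would change which oscillators sound.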

Fragment can also produce visuals synchronized to the produced sounds.

Visuals and sounds can be manipulated by tweaking the script variables directly or through MIDI-enabled control widgets (this is a Chrome- and Opera-only feature because Firefox does not implement it right now). It is also possible to manipulate the spectrum with your camera or with images, so you can draw a score on a piece of paper or anything else and play it back with your camera or by adding images.
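To show what driving a script variable from a MIDI control amounts to, here is a small Python sketch that scales a 7-bit controller value (0 to 127) into a variable's range. The function name `cc_to_uniform` and the "cutoff" variable are hypothetical and only illustrate the idea; Fragment's own widgets may map values differently.

```python
def cc_to_uniform(cc_value, lo=0.0, hi=1.0):
    """Scale a 7-bit MIDI CC value (0..127) linearly into [lo, hi]."""
    cc_value = max(0, min(127, cc_value))  # clamp out-of-range input
    return lo + (hi - lo) * cc_value / 127.0

# Hypothetical example: a knob driving a "cutoff" variable between 100 and 8000.
cutoff = cc_to_uniform(64, lo=100.0, hi=8000.0)
```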

This is mostly web-based, but there is a port of the synthesis engine to Linux/Windows/Raspberry Pi which provides crackle-free performance in case you have a “slow” CPU. I will also soon release a standalone all-in-one executable for Linux and other platforms, which will give good performance directly.

This synth requires a beefy CPU and GPU, plus some knowledge of GLSL, in order to use it. You can follow the comments in the example for some hints and open the help dialog for more help; documentation is also available.

If you have any questions, I will be glad to answer them here.

You can try it at: https://www.fsynth.com

Documentation can be found here: www.fsynth.com/documentation