OK, I did some tests. It seems that the smaller the buffer size, the more accurate the timing.

A low setting of 64 samples is the best, and things get progressively worse from 512 samples upward at high step speeds (1/32 to 1/64 notes). Other hosts like Loomer and Reaper stay accurate at a buffer size of 512 samples.

Test signal = Microtonic

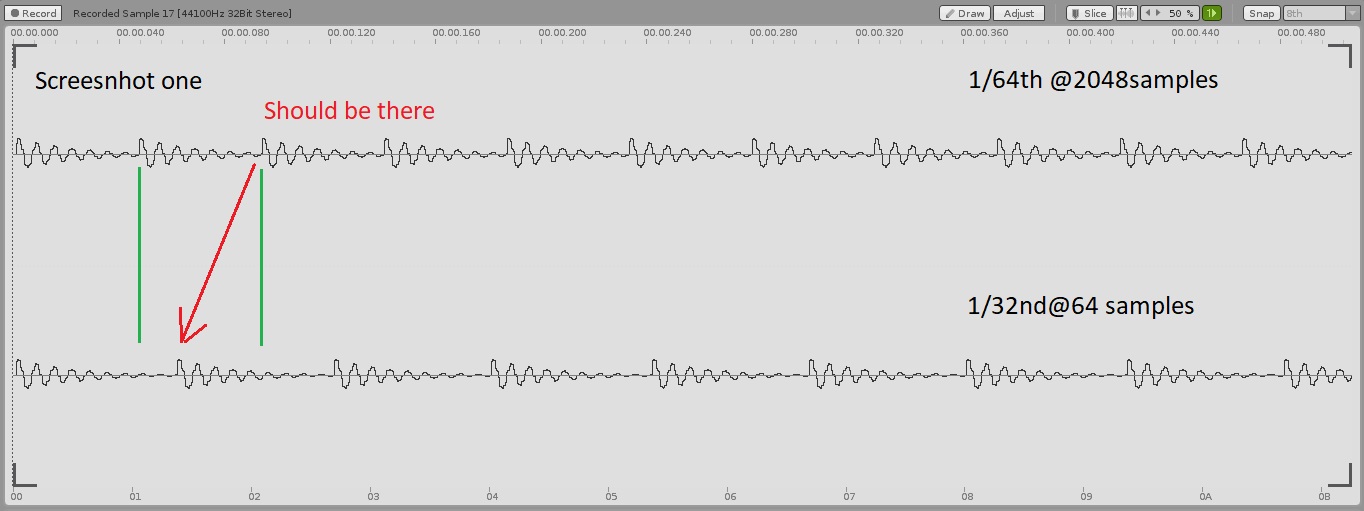

Example: a pattern with 1/64 steps at a 2048-sample buffer sounds stable, but the steps are not really 1/64. They are somewhere between 1/64 and 1/32 (see screenshot 1, where the files are aligned).
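For a rough sense of scale, here is a small Python sketch that compares the length of one audio buffer with the length of one sequencer step. The 44.1 kHz sample rate and 120 BPM tempo are assumptions for illustration only (neither is stated for these tests), and the function names are made up for this example. It does not prove what the host is doing; it only shows when a buffer becomes longer than a step.

```python
# Assumed values, NOT taken from the tests above.
ASSUMED_SAMPLE_RATE = 44100   # Hz
ASSUMED_BPM = 120.0           # quarter notes per minute


def buffer_ms(buffer_samples, sample_rate=ASSUMED_SAMPLE_RATE):
    """Duration of one audio buffer in milliseconds."""
    return 1000.0 * buffer_samples / sample_rate


def step_ms(division, bpm=ASSUMED_BPM):
    """Duration of one step in milliseconds.

    division is the note denominator: 32 for a 1/32 note, 64 for a 1/64 note.
    A whole note is four quarter notes.
    """
    whole_note_ms = 4 * 60000.0 / bpm
    return whole_note_ms / division


for size in (64, 512, 2048):
    print(f"buffer {size:>4} samples = {buffer_ms(size):6.2f} ms")

for division in (32, 64):
    print(f"1/{division} step          = {step_ms(division):6.2f} ms")
```

Under these assumed settings a 1/64 step lasts 31.25 ms and a 1/32 step 62.5 ms, while a 2048-sample buffer lasts about 46.4 ms, so it is longer than a single 1/64 step. A 64-sample buffer is about 1.5 ms, far shorter than either step. If a host only placed events on buffer boundaries (a guess, not something I have confirmed), that would be one way to end up with steps stretched past 1/64.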

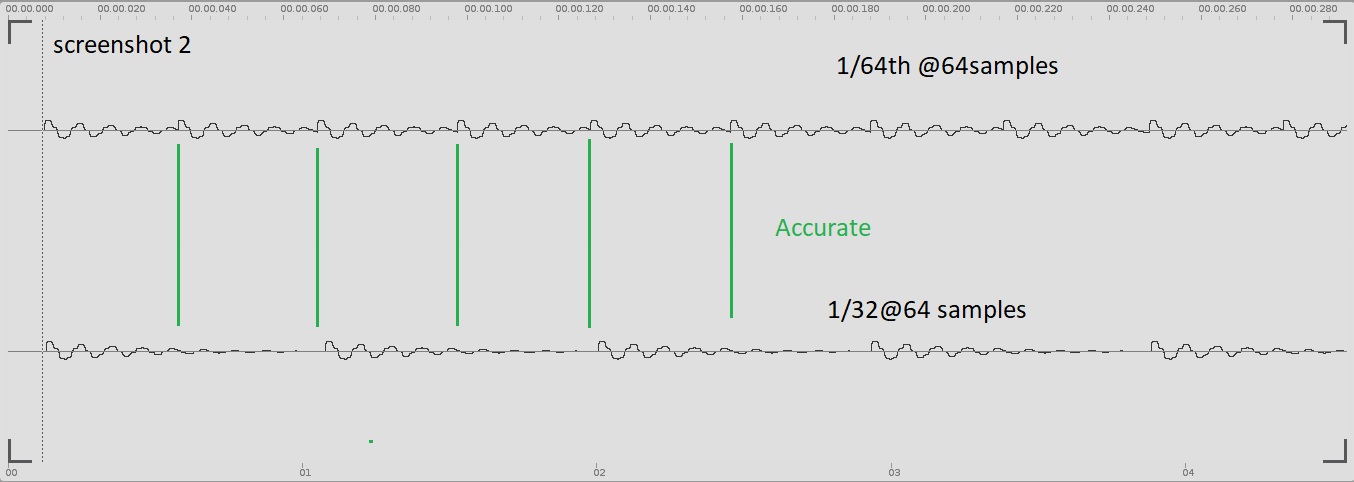

Screenshot 2 shows 1/64 and 1/32 steps, both at 64 samples, with accurate timing.

Here’s the file, with descriptions of the buffer size used and the sequencer step length (Cirklon):

https://app.box.com/s/tjycspjp9nm1pvj36tute2xuckryw855